What is a half cycle path? A half cycle timing path is one in which launch and capture happen on opposite clock edges. Either the setup check or the hold check of a path can be half cycle. In common usage, however, a half cycle path is one whose setup check is formed as a half cycle check. The following are some examples of half cycle timing paths:

- A timing path from a positive edge-triggered flip-flop to a negative edge-triggered flip-flop, and vice versa. Here, the hold check is also half cycle, on the previous edge.

- A timing path from a positive level-sensitive latch to a negative level-sensitive latch, and vice versa. Here, the hold check is zero cycle.

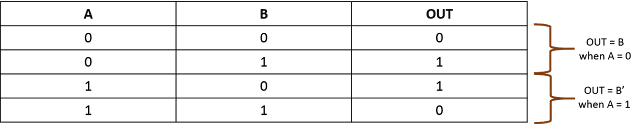

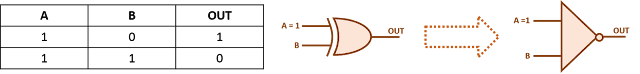

- A timing path from a negative edge-triggered flip-flop forming a clock gating check on an AND gate (here, the hold check is zero cycle)

- A timing path from a positive edge-triggered flip-flop forming a clock gating check on an OR gate (here, the hold check is zero cycle)

There are also some cases where the hold check is half cycle and the setup check is single/zero cycle. These are:

- A timing path from a negative edge-triggered flip-flop forming a clock gating check on an OR gate (here, the setup check is a single cycle check)

- A timing path from a positive edge-triggered flip-flop forming a clock gating check on an AND gate (here, the setup check is a single cycle check)
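To make the flip-flop case from the first list concrete, here is a minimal sketch of which capture edges are checked for a positive edge-triggered launch flop feeding a negative edge-triggered capture flop. It assumes an ideal clock with 50% duty cycle and no skew. The function name and the 10 ns period are illustrative assumptions, not taken from any tool.

```python
def flop_half_cycle_edges(t_period, t_launch=0.0):
    """Capture edges for a posedge-launch -> negedge-capture flop pair.

    Assumes an ideal 50% duty cycle clock and zero skew (illustrative only).
    """
    half = t_period / 2.0
    setup_capture = t_launch + half  # next falling edge after the launch edge
    hold_capture = t_launch - half   # previous falling edge before the launch edge
    return setup_capture, hold_capture


setup_edge, hold_edge = flop_half_cycle_edges(t_period=10.0)
print(setup_edge, hold_edge)  # 5.0 -5.0
```

Both checks sit half a period away from the launch edge, which is why this case has a half cycle setup check and a half cycle hold check.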

In addition, minimum pulse width checks should also be treated the same as half cycle timing paths. In this case, however, the start point and the end point are the same register.

In this post, we will consider only setup timing paths as examples, although the discussion applies to all kinds of half cycle paths/checks. To start with, let us note down the simplest setup check equation for half cycle timing paths.

Tck->q + Tprop + Tsetup < (Tperiod/2) + Tskew
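As a quick sanity check of the equation above, the sketch below computes setup slack as the right-hand side minus the left-hand side. The function name and all numeric values (in ns) are hypothetical and chosen only for illustration.

```python
def half_cycle_setup_slack(t_ck_q, t_prop, t_setup, t_period, t_skew=0.0):
    """Setup slack for a half cycle path; a positive value means the check passes."""
    required = t_period / 2.0 + t_skew   # right-hand side of the equation
    arrival = t_ck_q + t_prop + t_setup  # left-hand side of the equation
    return required - arrival


# Hypothetical numbers in ns: 10 ns clock, 0.25 ns of helpful skew.
slack = half_cycle_setup_slack(t_ck_q=0.5, t_prop=3.0, t_setup=0.25,
                               t_period=10.0, t_skew=0.25)
print(slack)  # 1.5
```

With these numbers the data path has 5.25 ns available and uses 3.75 ns, so the path meets setup with 1.5 ns to spare.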

Let us now discuss some of the intricacies that we should be aware of while dealing with half cycle timing paths:

Clock source duty cycle variation: There is always some variation in the duty cycle of the clock source due to uncertainty in the relative timing of positive and negative edges. Duty cycle variation is always measured with respect to corresponding positive and negative edges. In other words, duty cycle variation is the uncertainty in the arrival of the negative edge, given that the positive edge has arrived at a certain fixed point in time. Let us take an example. Suppose we are given a clock with a period of 10 ns and an ideal 50% duty cycle, and the clock has a duty cycle variation of +-5%. Then, if we see a positive edge of the clock at 10 ns, we can expect to see the negative edge at any time between 14.5 ns and 15.5 ns. Following waveform illustrates this. You can read my earlier post duty cycle variation to have a more detailed elaboration.
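The arithmetic in this example can be reproduced with a short sketch. It assumes the +-5% figure is expressed as a fraction of the clock period, which is what the 14.5 ns to 15.5 ns window implies. The function name is illustrative.

```python
def falling_edge_window(t_rise, t_period, duty=0.5, variation=0.05):
    """Earliest and latest falling edge after a rising edge seen at t_rise.

    `variation` is the duty cycle variation as a fraction of the period
    (assumption: +-5% means the duty cycle ranges from 45% to 55%).
    """
    earliest = t_rise + (duty - variation) * t_period
    latest = t_rise + (duty + variation) * t_period
    return earliest, latest


earliest, latest = falling_edge_window(t_rise=10.0, t_period=10.0)
print(round(earliest, 3), round(latest, 3))  # 14.5 15.5
```

The width of this window, measured from the ideal falling edge, is the amount by which the effective half period can shrink.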

So, the setup check equation becomes:

Tck->q + Tprop + Tsetup < (Tperiod/2 - Tsdc) + Tskew

where Tsdc is the clock source duty cycle variation. Thus, the effective half clock period reduces by an amount equal to the duty cycle variation.

Duty cycle degradation: In addition to source duty cycle variation, there can be asymmetry between the rise delay and the fall delay of clock elements. For instance, a buffer may have a nominal rise (0 -> 1) delay of 50 ns but a fall (1 -> 0) delay of 48 ns. So, if a clock pulse passes through it, the buffer will eat a portion of this clock pulse, as shown in figure 1 below. For more clarity, we have exaggerated the scenario with a fall delay of 30 ns.
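The effect of the exaggerated buffer on a clock pulse can be sketched as below. The 50 ns rise delay and 30 ns fall delay come from the example; the 200 ns period with a 100 ns high phase is a hypothetical input clock chosen only so that the numbers are easy to follow.

```python
def pulse_widths_after_cell(t_period, high_width, rise_delay, fall_delay):
    """High and low pulse widths at the output of a clock cell.

    The rising edge is delayed by rise_delay and the falling edge by
    fall_delay, so the high phase changes by (fall_delay - rise_delay).
    """
    high_out = high_width + (fall_delay - rise_delay)
    low_out = t_period - high_out  # the period itself is unchanged
    return high_out, low_out


# Hypothetical 200 ns clock with a 100 ns high phase at the buffer input.
high_out, low_out = pulse_widths_after_cell(t_period=200.0, high_width=100.0,
                                            rise_delay=50.0, fall_delay=30.0)
print(high_out, low_out)  # 80.0 120.0
```

The high phase shrinks by 20 ns and the low phase grows by the same 20 ns, while the period stays at 200 ns.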

So, the half cycle seen at the clock pin may be larger or smaller than the ideal half cycle. In the exaggerated example above, the positive -> negative edge setup check will be tighter by 20 ns and the negative -> positive edge setup check will be relaxed by the same amount (neglecting OCV for now). So, the modified setup equation now becomes:

Tck->q + Tprop + Tsetup < (Tperiod/2 - Tsdc) + (Tskew - Tdcd)

As discussed above, Tdcd can be positive or negative depending upon whether the rise-fall asymmetry of the cells is helping or opposing.
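Putting both effects together, the final equation can be sketched as a slack calculation. The sign convention follows the equation above: a positive Tdcd tightens the check and a negative Tdcd relaxes it. The function name and all numeric values (in ns) are hypothetical.

```python
def half_cycle_setup_slack(t_ck_q, t_prop, t_setup, t_period,
                           t_skew=0.0, t_sdc=0.0, t_dcd=0.0):
    """Setup slack for a half cycle path with duty cycle effects included.

    t_sdc: clock source duty cycle variation (always reduces the half period).
    t_dcd: duty cycle degradation in the clock path (positive tightens the
           check, negative relaxes it).
    """
    required = (t_period / 2.0 - t_sdc) + (t_skew - t_dcd)
    arrival = t_ck_q + t_prop + t_setup
    return required - arrival


# Hypothetical path in ns, checked with opposing and with helping degradation.
common = dict(t_ck_q=0.5, t_prop=3.0, t_setup=0.25,
              t_period=10.0, t_skew=0.25, t_sdc=0.5)
slack_opposing = half_cycle_setup_slack(t_dcd=0.25, **common)
slack_helping = half_cycle_setup_slack(t_dcd=-0.25, **common)
print(slack_opposing, slack_helping)  # 0.75 1.25
```

Leaving `t_sdc` and `t_dcd` at zero gives back the simplest form of the equation, so the same function covers all three versions discussed in this post.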

Can you think of some other scenario that is specific only to half cycle timing paths? Do share, if you do.